I am trying to fetch Composite extraction request data from Data Scope Select using Python and trying to generate gzip csv file but i am only getting Authentication back as result and not getting the result data for Instument. I am trying to test with one ISIN currently.

Currently i am getting error as :

HTTP status of the response: 400

NameError: name 'jobId' is not defined

I have also attached my Python example just to know if i am providing something wrong in the request.

Do we already have any working Python example for Composite extraction request ?

Below is how i am providing Composite extraction request in Python :

requestUrl = reqStart + '/Extractions/ExtractRaw'

requestHeaders = {

"Prefer": "respond-async",

"Content-Type": "application/json",

"Authorization": "token " + token

}

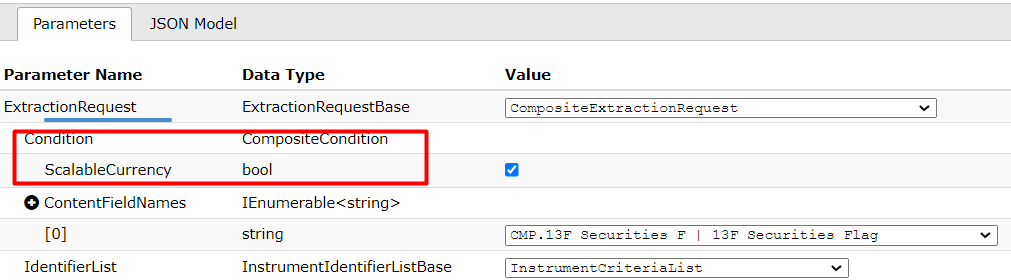

requestBody = {

"ExtractionRequest": {

"@odata.type": "#DataScope.Select.Api.Extractions.ExtractionRequests.CompositeExtractionRequest",

"ContentFieldNames": [

"ISIN", "Announcement Date"

],

"IdentifierList": {

"@odata.type": "#DataScope.Select.Api.Extractions.ExtractionRequests.InstrumentIdentifierList",

"InstrumentIdentifiers": [{

"Identifier": "DE000NLB1KJ5",

"IdentifierType": "Isin"

}]

},

"Condition": {

}

}

}

r2 = requests.post(requestUrl, json=requestBody, headers=requestHeaders)

r3 = r2

# Display the HTTP status of the response

# Initial response status (after approximately 30 seconds wait) is usually 202

status_code = r2.status_code

log("HTTP status of the response: " + str(status_code))

# If status is 202, display the location url we received, and will use to poll the status of the extraction request:

if status_code == 202:

requestUrl = r2.headers["location"]

log('Extraction is not complete, we shall poll the location URL:')

log(str(requestUrl))

requestHeaders = {

"Prefer": "respond-async",

"Content-Type": "application/json",

"Authorization": "token " + token

}

# As long as the status of the request is 202, the extraction is not finished;

# we must wait, and poll the status until it is no longer 202:

while (status_code == 202):

log('As we received a 202, we wait 30 seconds, then poll again (until we receive a 200)')

time.sleep(30)

r3 = requests.get(requestUrl, headers=requestHeaders)

status_code = r3.status_code

log('HTTP status of the response: ' + str(status_code))

# When the status of the request is 200 the extraction is complete;

# we retrieve and display the jobId and the extraction notes (it is recommended to analyse their content)):

if status_code == 200:

r3Json = json.loads(r3.text.encode('ascii', 'ignore'))

notes = r3Json["Notes"]

log('Extraction notes:\n' + notes[0])

# If instead of a status 200 we receive a different status, there was an error:

if status_code != 200:

log('An error occured. Try to run this cell again. If it fails, re-run the previous cell.\n')

requestUrl = requestUrl = reqStart + "/Extractions/RawExtractionResults"

# AWS requires an additional header: X-Direct-Download

if useAws:

requestHeaders = {

"Prefer": "respond-async",

"Content-Type": "text/plain",

"Accept-Encoding": "gzip",

"X-Direct-Download": "true",

"Authorization": "token " + token

}

else:

requestHeaders = {

"Prefer": "respond-async",

"Content-Type": "text/plain",

"Accept-Encoding": "gzip",

"Authorization": "token " + token

}